jerasure.org is set up to host the upstream repositories for the GF-complete and jerasure libraries. Contributors may sign up or reuse their existing GitHub account. A companion continuous integration server runs make check on each merge request.


Customizing the GitLab home page

Customizing the GitLab home page is a proprietary extension that is not available in the Free Software version. When running GitLab from a Docker container, the home page template must be moved to a location that survives the removal of the container:

```
$ layouts=/home/git/gitlab/app/views/layouts/
$ docker exec gitlab mkdir -p /home/git/data/$layouts
$ docker exec gitlab mv $layouts/devise.html.haml /home/git/data/$layouts
$ docker exec gitlab ln -s /home/git/data/$layouts/devise.html.haml \
    $layouts/devise.html.haml
```

The template can now be modified in /opt/gitlab/data/home/git/gitlab/app/views/layouts/ from the host running the container. It is a HAML template, which may contain raw HTML as long as the indentation is respected.

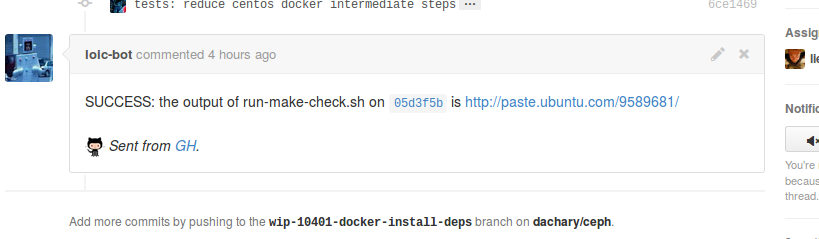

A make check bot for Ceph contributors

The automated make check bot for Ceph runs on Ceph pull requests. It is still experimental and is not yet triggered by every pull request.

It does the following:

- Create a docker container (using ceph-test-helper.sh)

- Check out the merge of the pull request into the destination branch (tested on master, next, giant and firefly)

- Execute run-make-check.sh

- Add a comment to the pull request with a link to the full output of run-make-check.sh.
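The bot's own code is not shown here. The following is a minimal, hypothetical Python sketch of the "check out the merge, then run make check" steps. It assumes a Ceph clone whose origin remote is GitHub, which publishes the head of every pull request as refs/pull/NUMBER/head. It also assumes git has user.name and user.email configured so the merge can be committed. The function names and the pr-NUMBER-merge branch name are made up, and unlike the bot, the sketch runs on the host rather than in a Docker container.

```python
import subprocess
from typing import List


def make_check_commands(number: int, base: str, remote: str = "origin") -> List[List[str]]:
    """Commands that merge pull request `number` into `base` and run make check."""
    pr_ref = "refs/remotes/%s/pr/%d" % (remote, number)
    base_ref = "refs/remotes/%s/%s" % (remote, base)
    return [
        # GitHub publishes the head of every pull request as refs/pull/<number>/head
        ["git", "fetch", remote, "+refs/pull/%d/head:%s" % (number, pr_ref)],
        # Refresh the destination branch (master, next, giant, firefly, ...)
        ["git", "fetch", remote, "+refs/heads/%s:%s" % (base, base_ref)],
        # Throwaway branch (hypothetical name) starting at the destination branch
        ["git", "checkout", "-B", "pr-%d-merge" % number, base_ref],
        # Merge the pull request into it, so the tested tree is the merge result
        ["git", "merge", "--no-ff", "--no-edit", pr_ref],
        ["./run-make-check.sh"],
    ]


def run_commands(commands: List[List[str]], cwd: str) -> int:
    """Run each command in the checkout `cwd`, stopping at the first failure."""
    for argv in commands:
        returncode = subprocess.call(argv, cwd=cwd)
        if returncode != 0:
            return returncode
    return 0
```

For instance, run_commands(make_check_commands(1234, "master"), "/path/to/ceph") would test a hypothetical pull request 1234. Its return code is what a bot would report back in its comment.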

A use case for developers is:

- write a patch and send a pull request

- switch to another branch and work on another patch while the bot is running

- if the bot reports a failure, switch back to the original branch and push a fix: the bot will notice the new push and run again

It also helps reviewers, who can wait until the bot succeeds before looking closely at the patch.


Teuthology docker targets hack (3/5)

The teuthology container hack has been improved so that each Ceph command is run via docker exec -i, which can read from stdin as of Docker 1.4 (released in December 2014).

It can run the following job:

```yaml
machine_type: container
os_type: ubuntu
os_version: "14.04"
suite_path: /home/loic/software/ceph/ceph-qa-suite
roles:
- - mon.a
  - osd.0
  - osd.1
  - client.0
overrides:
  install:
    ceph:
      branch: master
  ceph:
    wait-for-scrub: false
tasks:
- install:
- ceph:
```

The job completes in under one minute when it is run a second time and the bulk of the installation can be reused:

```
{duration: 50.01510691642761, flavor: basic, owner: loic@dachary.org, success: true}
```
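The improvement relies on the fact that docker exec -i forwards stdin to the command it runs in the container. Here is a minimal Python sketch of that mechanism. It is not teuthology's actual remote implementation, and it assumes a running Docker daemon (1.4 or later). The function names, the container name and the command in the usage example are hypothetical.

```python
import subprocess
from typing import List, Optional, Sequence


def docker_exec_argv(container: str, command: Sequence[str]) -> List[str]:
    """argv that runs `command` inside `container` with stdin kept open (-i)."""
    return ["docker", "exec", "-i", container] + list(command)


def run_in_container(container: str, command: Sequence[str],
                     stdin: Optional[bytes] = None) -> subprocess.CompletedProcess:
    """Run `command` in `container`, feeding it `stdin` (needs Docker >= 1.4)."""
    return subprocess.run(
        docker_exec_argv(container, command),
        input=stdin,              # bytes piped to the command inside the container
        stdout=subprocess.PIPE,
        stderr=subprocess.PIPE,
        check=False,              # the caller inspects returncode
    )
```

For instance, run_in_container("ceph-target-0", ["sudo", "tee", "/etc/ceph/ceph.conf"], stdin=conf_bytes) would write a file inside a hypothetical container without first copying it there. This is the kind of operation that needs stdin.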

Why are by-partuuid symlinks missing or outdated?

The ceph-disk script manages Ceph devices and relies on the content of the /dev/disk/by-partuuid directory, which is updated by udev rules. For instance:

- a new partition is created with

```
/sbin/sgdisk --largest-new=1 --change-name=1:'ceph data' \
    --partition-guid=1:83c14a9b-0493-4ccf-83ff-e3e07adae202 \
    --typecode=1:89c57f98-2fe5-4dc0-89c1-f3ad0ceff2be -- /dev/loop4
```

- the kernel is notified of the change with partprobe or partx and fires a udev event

- the udev daemon receives UDEV [249708.246769] add /devices/virtual/block/loop4/loop4p1 (block) and the rules in /lib/udev/rules.d/60-persistent-storage.rules create the corresponding symlink.

Let's say the partition table is later removed (with sudo sgdisk --zap-all --clear --mbrtogpt -- /dev/loop4, for instance) and the kernel is not notified with partprobe or partx. If the first partition is then created again with a different partition GUID and the kernel is notified as above, the kernel will not notice any difference and will not send a udev event. As a result, /dev/disk/by-partuuid will contain an outdated symlink.

The problem can be fixed by manually removing the stale symlink from /dev/disk/by-partuuid, clearing the partition table and notifying the kernel again. The events sent to udev can be displayed with:

```
# udevadm monitor
...
KERNEL[250902.072077] change /devices/virtual/block/loop4 (block)
UDEV [250902.100779] change /devices/virtual/block/loop4 (block)
KERNEL[250902.101235] remove /devices/virtual/block/loop4/loop4p1 (block)
UDEV [250902.101421] remove /devices/virtual/block/loop4/loop4p1 (block)
...
```

The environment and scripts used for a block device can be displayed with:

```
# udevadm test /block/sdb/sdb1
...
udev_rules_apply_to_event: IMPORT '/sbin/blkid -o udev -p /dev/sdb/sdb1' /lib/udev/rules.d/60-ceph-partuuid-workaround.rules:28
udev_event_spawn: starting '/sbin/blkid -o udev -p /dev/sdb1'
...
```
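Looking for stale symlinks by hand can be sketched in Python. For each symlink in /dev/disk/by-partuuid, the sketch asks blkid -o udev -p (the invocation shown in the udevadm test output above) for the ID_PART_ENTRY_UUID of the target. It then reports links whose target is gone or whose name no longer matches that GUID. Two assumptions are labeled here: that ID_PART_ENTRY_UUID is the value the udev rules use to name the links, and that the script runs as root with blkid installed. The helper names are hypothetical and not part of ceph-disk. The sketch only reports; removing links, clearing the partition table and notifying the kernel is left to the administrator.

```python
import os
import subprocess
from typing import Callable, Dict, List, Optional, Tuple


def parse_udev_output(text: str) -> Dict[str, str]:
    """Parse the KEY=value lines printed by `blkid -o udev`."""
    values = {}
    for line in text.splitlines():
        key, sep, value = line.partition("=")
        if sep:
            values[key.strip()] = value.strip()
    return values


def probe_part_entry_uuid(device: str) -> Optional[str]:
    """Probe `device` directly (-p bypasses the blkid cache) for its partition GUID."""
    result = subprocess.run(["blkid", "-o", "udev", "-p", device],
                            stdout=subprocess.PIPE, universal_newlines=True, check=False)
    return parse_udev_output(result.stdout).get("ID_PART_ENTRY_UUID")


def stale_partuuid_links(directory: str = "/dev/disk/by-partuuid",
                         probe: Callable[[str], Optional[str]] = probe_part_entry_uuid,
                         ) -> List[Tuple[str, str, Optional[str]]]:
    """Return (link name, target, actual GUID or None) for each link that is stale."""
    stale = []
    for name in sorted(os.listdir(directory)):
        link = os.path.join(directory, name)
        if not os.path.islink(link):
            continue
        target = os.path.realpath(link)
        if not os.path.exists(target):
            stale.append((name, target, None))    # dangling: the partition is gone
            continue
        actual = probe(target)
        if actual is None or actual.lower() != name.lower():
            stale.append((name, target, actual))  # name no longer matches the GUID
    return stale
```

The probe parameter is injectable so that the logic can be exercised on a scratch directory without touching real block devices.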

How many PGs are in each OSD of a Ceph cluster?

To display how many PGs each OSD of a Ceph cluster holds:

```
$ ceph --format xml pg dump | \
    xmlstarlet sel -t -m "//pg_stats/pg_stat/acting" -v osd -n | \
    sort -n | uniq -c
    332 0
    312 1
    299 2
    326 3
    291 4
    295 5
    316 6
    311 7
    301 8
    313 9
```

Here xmlstarlet loops over the acting set of each PG (-m "//pg_stats/pg_stat/acting") and displays the OSDs it contains (-v osd), one per line (-n). The first column is the number of PGs whose acting set contains the OSD shown in the second column.

To restrict the display to the PGs belonging to a given pool:

```
ceph --format xml pg dump | \
    xmlstarlet sel -t -m "//pg_stats/pg_stat[starts-with(pgid,'0.')]/acting" -v osd -n | \
    sort -n | uniq -c
```

Here 0. is the prefix of the id of every PG that belongs to pool 0.
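Where xmlstarlet is not available, the same count can be sketched with Python's standard library. It mirrors the two XPath expressions above: each pg_stat under pg_stats, the osd elements of its acting set, and an optional pool filter on the pgid prefix. The element layout is assumed from those expressions only, and the function names are made up for this example.

```python
import xml.etree.ElementTree as ET
from collections import Counter
from typing import Optional, Union


def pgs_per_osd(xml_text: Union[str, bytes], pool: Optional[int] = None) -> Counter:
    """Count, for each OSD, the PGs whose acting set contains it."""
    root = ET.fromstring(xml_text)
    prefix = None if pool is None else "%d." % pool   # e.g. "0." for pool 0
    counts = Counter()
    for pg_stats in root.iter("pg_stats"):             # iter() includes root itself
        for pg in pg_stats.findall("pg_stat"):
            pgid = pg.findtext("pgid", default="")
            if prefix is not None and not pgid.startswith(prefix):
                continue
            for osd in pg.findall("acting/osd"):
                counts[int(osd.text)] += 1
    return counts


def print_counts(counts: Counter) -> None:
    """Print "count osd" lines sorted by OSD id, like sort -n | uniq -c."""
    for osd in sorted(counts):
        print("%7d %d" % (counts[osd], osd))
```

On a node with an admin keyring, the input could come from subprocess.check_output(["ceph", "--format", "xml", "pg", "dump"]). Filtering on "0." rather than "0" avoids counting pool 10 as pool 1, just like the starts-with expression.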

GitLab CI runner installation

This content is obsolete

The GitLab CI runner installation instructions are adapted to Ubuntu 14.04, so that the runner connects to GitLab CI and runs jobs when a commit is pushed to a branch. The recommended packages are installed, except postfix, with ruby2.0 and ruby2.0-dev added:

```
sudo apt-get update -y
sudo apt-get install -y wget curl gcc libxml2-dev libxslt-dev \
    libcurl4-openssl-dev libreadline6-dev libc6-dev \
    libssl-dev make build-essential zlib1g-dev openssh-server \
    git-core libyaml-dev libpq-dev libicu-dev \
    ruby2.0 ruby2.0-dev
```

Ruby 2.0 is made the default Ruby interpreter:

```
sudo rm /usr/bin/ruby /usr/bin/gem /usr/bin/irb /usr/bin/rdoc /usr/bin/erb
sudo ln -s /usr/bin/ruby2.0 /usr/bin/ruby
sudo ln -s /usr/bin/gem2.0 /usr/bin/gem
sudo ln -s /usr/bin/irb2.0 /usr/bin/irb
sudo ln -s /usr/bin/rdoc2.0 /usr/bin/rdoc
sudo ln -s /usr/bin/erb2.0 /usr/bin/erb
sudo gem update --system
sudo gem pristine --all
```

The bundler gem is installed:

```
sudo gem install bundler
```

and the GitLab CI runner user is created:

```
sudo adduser --disabled-login --gecos 'GitLab CI Runner' gitlab_ci_runner
```

The GitLab CI runner code is installed in the home directory of that user with:

```
sudo su gitlab_ci_runner
cd ~/
git clone https://gitlab.com/gitlab-org/gitlab-ci-runner.git
cd gitlab-ci-runner
bundle install --deployment
```

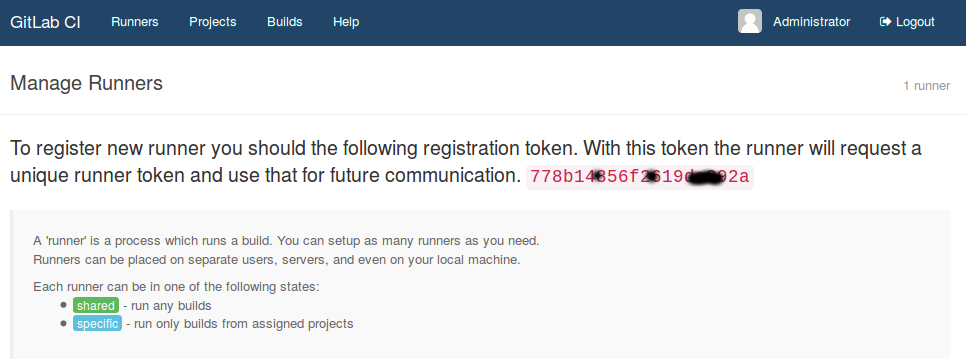

The CI token is retrieved from the GitLab CI pannel

and used to grant access to the runner:

CI_SERVER_URL=http://workbench.dachary.org:8080 \ REGISTRATION_TOKEN=778b1d4856f26da392a bundle exec ./bin/setup

The daemon is started from root with:

su gitlab_ci_runner -c 'cd $HOME/gitlab-ci-runner ; bundle exec ./bin/runner'

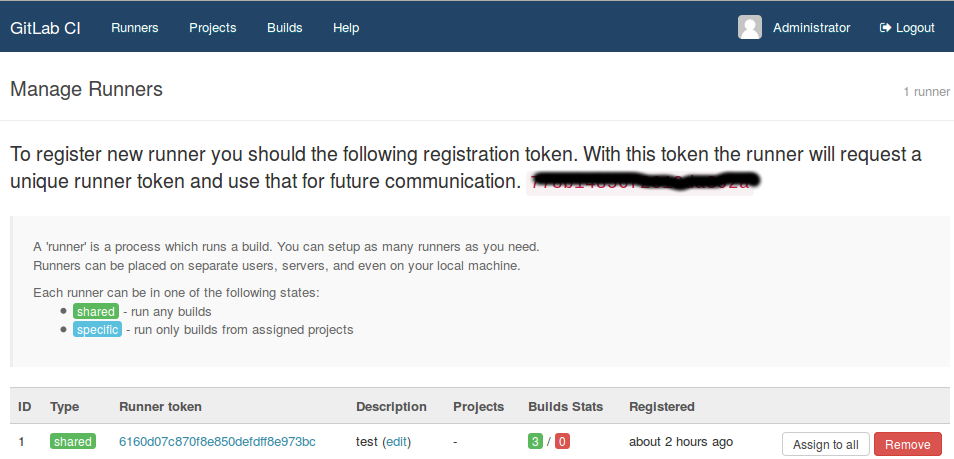

The GitLab CI interface shows the runner as registered:

Assuming all of the above was done from within a Docker container, the container can be saved as an image with

```
docker commit b504ab6ba122 gitlab-runner
```

and that image can be used to start more runners:

```
$ docker run --rm -t gitlab-runner \
    su gitlab_ci_runner -c 'cd $HOME/gitlab-ci-runner ; \
      CI_SERVER_URL=http://workbench.dachary.org:8080 \
      REGISTRATION_TOKEN=b14852619da392a \
      bundle exec ./bin/setup ; bundle exec ./bin/runner'
Registering runner with registration token: 2619da3, url: http://workbench.dachary.org:8080.
Runner token: 35f9d40f2e072487870f987
Runner registered successfully. Feel free to start it!
* Gitlab CI Runner started
* Waiting for builds
2014-12-06 17:18:26 +0000 | Checking for builds...nothing
2014-12-06 17:20:27 +0000 | Checking for builds...received
2014-12-06 17:20:27 +0000 | Starting new build 6...
2014-12-06 17:20:27 +0000 | Build 6 started.
2014-12-06 17:20:32 +0000 | Submitting build 6 to coordinator...ok
2014-12-06 17:20:33 +0000 | Completed build 6, success.
2014-12-06 17:20:33 +0000 | Submitting build 6 to coordinator...aborted
2014-12-06 17:20:38 +0000 | Checking for builds...nothing
...
```

When the container is stopped, the runner must be removed manually from GitLab CI. Projects in GitLab CI are confused by the disappearance of the runner and must be removed and re-added, otherwise no job will be scheduled.

It is easier to install on Fedora 20:

```
sudo gem install bundler
sudo useradd -c 'GitLab CI Runner' gitlab_ci_runner
export PATH=/usr/local/bin:$PATH
cd ~/
git clone https://gitlab.com/gitlab-org/gitlab-ci-runner.git
cd gitlab-ci-runner
bundle install --deployment
CI_SERVER_URL=http://workbench.dachary.org:8080 \
REGISTRATION_TOKEN=XXXXX bundle exec ./bin/setup
```

GitLab CI installation

Assuming GitLab has been installed in a Docker container, GitLab CI can be installed and associated with it. GitLab CI needs a separate database server:

```
sudo mkdir -p /opt/mysql-ci/data
docker run --name=mysql-ci -d -e 'DB_NAME=gitlab_ci_production' \
    -e 'DB_USER=gitlab_ci' \
    -e 'DB_PASS=XXXXX' \
    -v /opt/mysql-ci/data:/var/lib/mysql sameersbn/mysql:latest
```

but it can reuse the Redis server from GitLab.

Create a new application in GitLab (name it GitLab CI, for instance; the name does not matter) and set the callback URL to http://workbench.dachary.org:8080/user_sessions/callback. GitLab then displays an application id, to be used for GITLAB_APP_ID below, and a secret, to be used for GITLAB_APP_SECRET.

```
docker pull sameersbn/gitlab-ci
sudo mkdir -p /opt/gitlab-ci/data
pw1=$(pwgen -Bsv1 64) ; echo $pw1
pw2=$(pwgen -Bsv1 64) ; echo $pw2
docker run --name='gitlab-ci' -it --rm \
    --link mysql-ci:mysql \
    --link redis:redisio \
    -e 'GITLAB_URL=http://workbench.dachary.org' \
    -e 'SMTP_ENABLED=true' \
    -e 'SMTP_USER=' \
    -e 'SMTP_HOST=172.17.42.1' \
    -e 'SMTP_PORT=25' \
    -e 'SMTP_STARTTLS=false' \
    -e 'SMTP_OPENSSL_VERIFY_MODE=none' \
    -e 'SMTP_AUTHENTICATION=:plain' \
    -e 'GITLAB_CI_PORT=8080' \
    -e 'GITLAB_CI_HOST=workbench.dachary.org' \
    -e "GITLAB_CI_SECRETS_DB_KEY_BASE=$pw1" \
    -e "GITLAB_CI_SECRETS_SESSION_KEY_BASE=$pw2" \
    -e 'GITLAB_APP_ID=feafd' \
    -e 'GITLAB_APP_SECRET=ffa8b' \
    -p 8080:80 \
    -v /var/run/docker.sock:/run/docker.sock \
    -v /opt/gitlab-ci/data:/home/gitlab_ci/data \
    -v $(which docker):/bin/docker sameersbn/gitlab-ci
```

GitLab CI uses port 8080 because port 80 is already in use by GitLab. The SMTP_* variables are the same as those used when GitLab was installed.

The user name and password are the same as those of the associated GitLab.
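If pwgen is not installed, comparable values for GITLAB_CI_SECRETS_DB_KEY_BASE and GITLAB_CI_SECRETS_SESSION_KEY_BASE can be drawn from Python's secrets module. This sketch only approximates pwgen -Bsv1 64. It assumes pwgen's -B option drops ambiguous characters and its -v option drops vowels (plus 0 and 1), and both exclusion sets below are that assumption written out. The function name is hypothetical.

```python
import secrets
import string

# Assumed equivalents of pwgen's -B (ambiguous characters) and -v (no vowels) filters
AMBIGUOUS = set("B8G6I1l0OQDS5Z2")
VOWELS = set("01aeiouyAEIOUY")
ALPHABET = "".join(c for c in string.ascii_letters + string.digits
                   if c not in AMBIGUOUS | VOWELS)


def random_secret(length: int = 64) -> str:
    """Random string drawn with a CSPRNG, like pwgen -s, from the filtered alphabet."""
    return "".join(secrets.choice(ALPHABET) for _ in range(length))


if __name__ == "__main__":
    print(random_secret())  # value for GITLAB_CI_SECRETS_DB_KEY_BASE
    print(random_secret())  # value for GITLAB_CI_SECRETS_SESSION_KEY_BASE
```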

Copy a GitHub pull request to GitLab

A mirror of a GitHub repository is set up with two remotes:

```
gitlab git@workbench.dachary.org:tests/testrepo.git (push)
origin https://github.com/loic-bot/testrepo (push)
```

The github2gitlab command of gh (run from ~gitmirrors/repositories/Tests/testrepo) creates a merge request in GitLab by copying the designated pull request from GitHub:

```
$ gh gg --user loic-bot --repo testrepo --number 3
```
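The internals of gh are not described here. As a rough, hypothetical sketch of the same idea, the mirror could fetch the pull request head, which GitHub publishes as refs/pull/NUMBER/head, from the origin remote. It would then push that head to a branch of the gitlab remote and create the merge request through the GitLab API (POST /projects/:id/merge_requests). The sketch only builds the git commands and the request fields. The HTTP call is left out, and the branch name and function names are assumptions. The pull argument stands for the JSON that the GitHub API returns for a pull request.

```python
from typing import Any, Dict, List


def copy_commands(number: int, branch: str) -> List[List[str]]:
    """git commands copying pull request `number` to `branch` on the gitlab remote."""
    local = "refs/heads/" + branch
    return [
        # GitHub exposes the head of each pull request as refs/pull/<number>/head
        ["git", "fetch", "origin", "+refs/pull/%d/head:%s" % (number, local)],
        # --force so that a repushed pull request replaces the previous copy
        ["git", "push", "--force", "gitlab", "%s:%s" % (local, local)],
    ]


def merge_request_fields(pull: Dict[str, Any], branch: str) -> Dict[str, str]:
    """Fields for GitLab's create merge request call, built from a GitHub pull."""
    return {
        "source_branch": branch,
        "target_branch": pull["base"]["ref"],   # branch the pull request targets
        "title": pull["title"],
        "description": pull.get("body") or "",  # GitHub returns null for an empty body
    }
```

For pull request 3 of testrepo, copy_commands(3, "github/pr/3") would be run in the mirror before posting merge_request_fields(pull, "github/pr/3") to GitLab. The github/pr/3 branch name is hypothetical.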
