At the end of last year, a new puppet-ceph module was bootstrapped with the ambitious goal of reuniting the dozens of individual efforts. I’m very happy with what we’ve accomplished. We are making progress despite a mixed community and, more importantly, we do things differently.

Continue reading “puppet-ceph update”

Ceph erasure code jerasure plugin benchmarks (Highbank ARMv7)

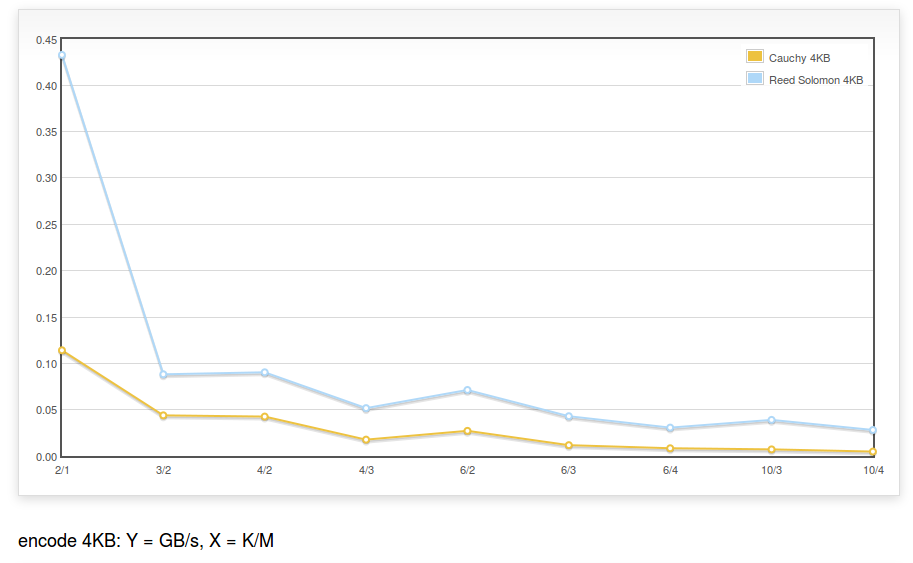

The benchmark described for Intel Xeon was run on a Highbank ARMv7 Processor rev 0 (v7l), made by Calxeda, using the same codebase:

The encoding speed is ~450MB/s for K=2,M=1 (i.e. a RAID5 equivalent) and ~25MB/s for K=10,M=4.

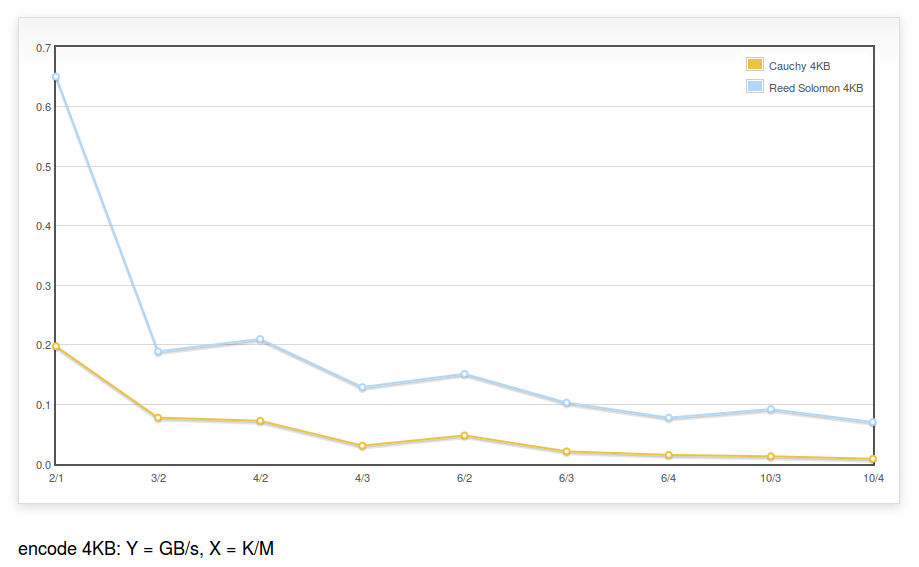

It was also run on a Highbank ARMv7 Processor rev 2 (v7l) (note the 2):

The encoding speed is ~650MB/s for K=2,M=1 (i.e. a RAID5 equivalent) and ~75MB/s for K=10,M=4.

Note: The code of the erasure code plugin does not contain any NEON optimizations.
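To put the two benchmarked configurations in perspective, here is a small sketch computing what each K/M pair costs and protects against. It assumes an MDS code such as the Reed Solomon techniques of jerasure, where any M of the K+M chunks can be lost; the function name is hypothetical.

```python
from fractions import Fraction


def erasure_profile_summary(k: int, m: int) -> dict:
    """Summarize a K/M profile: K data chunks plus M parity chunks per object."""
    if k < 1 or m < 0:
        raise ValueError("k must be >= 1 and m must be >= 0")
    return {
        "chunks": k + m,                 # one chunk per OSD, K+M OSDs per object
        "tolerated_failures": m,         # an MDS code survives the loss of any M chunks
        "overhead": Fraction(k + m, k),  # raw bytes stored per byte of object data
    }


# The two configurations benchmarked above.
for k, m in [(2, 1), (10, 4)]:
    s = erasure_profile_summary(k, m)
    print(f"K={k},M={m}: {s['chunks']} chunks, survives {s['tolerated_failures']} "
          f"lost chunk(s), stores {float(s['overhead']):.2f}x the object size")
```

K=2,M=1 stores 1.5 times the object size and survives one lost chunk, like a three disk RAID5, while K=10,M=4 survives four lost chunks for a 1.4 overhead, at the price of the much lower encoding speed measured above.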


Workaround DNSError when running teuthology-suite

Note: this is only useful for people with access to the Ceph lab.

When running Ceph integration tests with teuthology, the run may fail because of a DNS resolution problem such as:

    $ ./virtualenv/bin/teuthology-suite --base ~/software/ceph/ceph-qa-suite \
        --suite upgrade/firefly-x \
        --ceph wip-8475 --machine-type plana \
        --email loic@dachary.org --dry-run
    2014-06-27 INFO:urllib3.connectionpool:Starting new HTTP connection (1): ...
    requests.exceptions.ConnectionError: HTTPConnectionPool(host='gitbuilder.ceph.com', port=80): Max retries exceeded with url: /kernel-rpm-centos6-x86_64-basic/ref/testing/sha1 (Caused by: [Errno 3] name does not exist)

This may be caused by DNS propagation problems, and querying the ceph.com name server directly may work better. If running bind, adding the following to /etc/bind/named.conf.local will forward all ceph.com related DNS queries to the primary server (NS1.DREAMHOST.COM, i.e. 66.33.206.206), assuming /etc/resolv.conf is set to use the local DNS server first:

    zone "ceph.com." {
        type forward;
        forward only;
        forwarders { 66.33.206.206; };
    };

    zone "ipmi.sepia.ceph.com" {
        type forward;
        forward only;
        forwarders {
            10.214.128.4;
            10.214.128.5;
        };
    };

    zone "front.sepia.ceph.com" {
        type forward;
        forward only;
        forwarders {
            10.214.128.4;
            10.214.128.5;
        };
    };

The front.sepia.ceph.com zone will resolve machine names allocated by teuthology-lock and used as targets such as:

    targets:
      ubuntu@saya001.front.sepia.ceph.com: ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAABA ... 8r6pYSxH5b
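To check which forwarders a given name will be sent to, the sketch below mimics how the zones of the bind configuration are matched, assuming bind uses the most specific (longest) enclosing forward zone. The zone table is copied from the configuration; the function name is hypothetical and nothing is actually resolved.

```python
# Forward zones and forwarders from named.conf.local.
FORWARD_ZONES = {
    "ceph.com": ["66.33.206.206"],
    "ipmi.sepia.ceph.com": ["10.214.128.4", "10.214.128.5"],
    "front.sepia.ceph.com": ["10.214.128.4", "10.214.128.5"],
}


def forwarders_for(name: str, zones: dict = FORWARD_ZONES) -> list:
    """Return the forwarders of the most specific zone enclosing `name`,
    or an empty list when no forward zone applies (normal resolution)."""
    labels = name.rstrip(".").lower().split(".")
    # Try the longest suffix first: a.b.c.d, then b.c.d, then c.d, then d.
    for i in range(len(labels)):
        suffix = ".".join(labels[i:])
        if suffix in zones:
            return zones[suffix]
    return []


print(forwarders_for("saya001.front.sepia.ceph.com"))
print(forwarders_for("gitbuilder.ceph.com"))
```

A target such as saya001.front.sepia.ceph.com matches front.sepia.ceph.com before ceph.com and goes to the lab servers, while gitbuilder.ceph.com only matches ceph.com and goes to 66.33.206.206.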

Locally repairable codes and implied parity

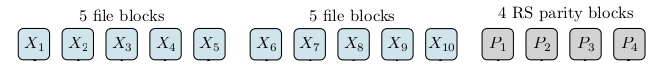

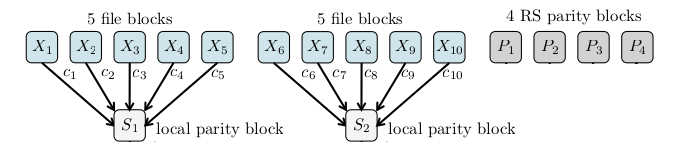

When a Ceph OSD is lost in an erasure coded pool, its content can be recovered from the other OSDs.

For instance, if OSD X3 is lost, the chunks on OSDs X1, X2, X4 to X10 and P1 to P4 are retrieved by the primary OSD, and the erasure code plugin uses them to rebuild the content of X3.

Locally repairable codes are designed to lower the bandwidth requirements when recovering from the loss of a single OSD. A local parity block is calculated for each group of five blocks: S1 for X1 to X5 and S2 for X6 to X10. When the X3 OSD is lost, instead of retrieving blocks from 13 OSDs, it is enough to retrieve X1, X2, X4, X5 and S1, that is 5 OSDs.
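The post does not say how the local parity blocks are computed. As an illustration only, here is a minimal sketch assuming each local parity is the XOR of the five blocks of its group, which is enough to rebuild any single missing block of that group from the four others plus the parity. The block contents are made up.

```python
from functools import reduce


def xor_blocks(blocks):
    """Byte-wise XOR of equally sized blocks."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


# Ten made-up data blocks X1..X10, three bytes each.
data = {f"X{i}": bytes([i, (i * 37) % 256, (i * 101) % 256]) for i in range(1, 11)}

# Local parity blocks (XOR assumed): S1 covers X1..X5, S2 covers X6..X10.
s1 = xor_blocks([data[f"X{i}"] for i in range(1, 6)])
s2 = xor_blocks([data[f"X{i}"] for i in range(6, 11)])

# X3 is lost: read the 4 surviving blocks of its group plus S1 (5 OSDs, not 13).
rebuilt_x3 = xor_blocks([data["X1"], data["X2"], data["X4"], data["X5"], s1])
assert rebuilt_x3 == data["X3"]

# Same for X8 in the second group.
rebuilt_x8 = xor_blocks([data["X6"], data["X7"], data["X9"], data["X10"], s2])
assert rebuilt_x8 == data["X8"]
```

XOR works here because every byte of S1 is the XOR of the matching bytes of X1 to X5, so XOR-ing S1 with four of them cancels those four and leaves the fifth.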

In some cases, local parity blocks can help recover from the loss of more blocks than any individual encoding function can. In the example above, let’s say five blocks are lost: X1, X2, X3, X4 and X8. The block X8 can be recovered from X6, X7, X9, X10 and S2. Now that only four blocks are missing, the global parity blocks P1 to P4 are enough to recover them. The combined effect of the local and global parity blocks acts as if there were an implied parity block.
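The reasoning of this example can be sketched as a two step check. This is a simplified model, not the logic of a Ceph plugin: it assumes only data blocks are lost, S1, S2 and P1 to P4 all survive, a local parity repairs a group with exactly one missing block, and the global code repairs any M=4 remaining losses. The names are hypothetical.

```python
GROUPS = [
    {"X1", "X2", "X3", "X4", "X5"},   # covered by local parity S1
    {"X6", "X7", "X8", "X9", "X10"},  # covered by local parity S2
]
M = 4  # global parity blocks P1..P4


def recoverable(lost: set) -> bool:
    """Return True if the lost data blocks can be rebuilt in this simplified model."""
    remaining = set(lost)
    # Step 1: local repairs, only for groups missing exactly one block.
    for group in GROUPS:
        missing = remaining & group
        if len(missing) == 1:
            remaining -= missing
    # Step 2: the global parity blocks handle whatever is left.
    return len(remaining) <= M


print(recoverable({"X1", "X2", "X3", "X4", "X8"}))  # X8 is repaired locally first
print(recoverable({"X1", "X2", "X3", "X6", "X7"}))  # no single-loss group, 5 > M
```

The first call prints True: five losses exceed M, but the local repair of X8 brings them back to four. The second prints False within this model.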

Ceph erasure code jerasure plugin benchmarks

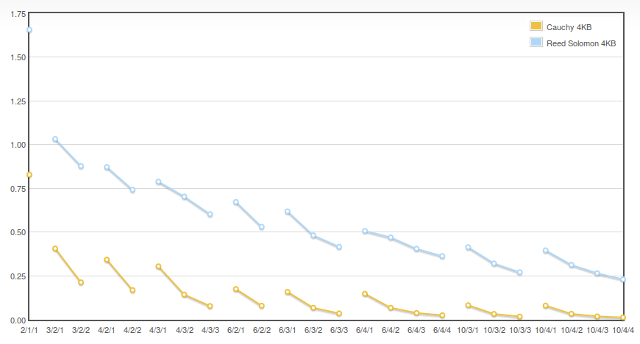

On an Intel(R) Xeon(R) CPU E5-2630 0 @ 2.30GHz processor (and all SIMD-capable Intel processors), the Reed Solomon Vandermonde technique of the jerasure plugin, which is the default in Ceph Firefly, performs better.

The chart shows decoding performance for erasure coded objects. The Y axis is in GB/s and the X axis labels are K/M/erasures. For instance, 10/3/2 means K=10, M=3 and 2 erasures: each object is sliced into K=10 equal chunks, M=3 parity chunks have been computed, and the jerasure plugin is used to recover from the loss of two chunks (i.e. 2 erasures).
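To make the K/M/erasures labels concrete, this sketch decodes a label and reports the chunk size for an object, assuming a hypothetical 4 MiB object and plain ceiling division (Ceph may pad chunks further for alignment). It also assumes, as with Reed Solomon, that any M lost chunks can be recovered. The function names are illustrative.

```python
def parse_label(label: str) -> tuple:
    """Split a chart label such as '10/3/2' into (K, M, erasures)."""
    k, m, erasures = (int(part) for part in label.split("/"))
    return k, m, erasures


def describe(label: str, object_size: int) -> str:
    k, m, erasures = parse_label(label)
    # Ceiling division; real chunk sizes may be padded further for alignment.
    chunk_size = (object_size + k - 1) // k
    # With Reed Solomon, any M of the K+M chunks can be lost.
    status = "recoverable" if erasures <= m else "not recoverable"
    return (f"K={k} data chunks + M={m} parity chunks of {chunk_size} bytes each, "
            f"{erasures} erasure(s): {status}")


# Hypothetical 4 MiB object.
print(describe("10/3/2", 4 * 1024 * 1024))
```

For 10/3/2 this reports chunks of 419431 bytes and a recoverable loss, since 2 erasures do not exceed M=3.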


Create a partition and make it an OSD

Note: it is similar to “Creating a Ceph OSD from a designated disk partition” but simpler.

In a nutshell, to use the remaining space on /dev/sda, assuming Ceph is already configured in /etc/ceph/ceph.conf, it is enough to:

    $ sgdisk --largest-new=$PARTITION --change-name="$PARTITION:ceph data" \
        --partition-guid=$PARTITION:$OSD_UUID \
        --typecode=$PARTITION:$PTYPE_UUID -- /dev/sda
    $ partprobe
    $ ceph-disk prepare /dev/sda$PARTITION
    $ ceph-disk activate /dev/sda$PARTITION
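The commands rely on $PARTITION, $OSD_UUID and $PTYPE_UUID, which are not defined here. The sketch below only builds (and prints) the sgdisk command line with illustrative values: the partition number 3 is hypothetical, the OSD uuid is freshly generated, and the type GUID is assumed to be the one ceph-disk uses to tag “ceph data” partitions, to be checked against your Ceph version.

```python
import shlex
import uuid

# Assumption: GPT partition type GUID that ceph-disk uses for "ceph data"
# partitions; verify it against the ceph-disk shipped with your Ceph version.
PTYPE_UUID = "4fbd7e29-9d25-41b8-afd0-062c0ceff05d"


def sgdisk_args(device: str, partition: int, osd_uuid: str) -> list:
    """Build (but do not run) the sgdisk command line for one new partition."""
    return [
        "sgdisk",
        f"--largest-new={partition}",
        f"--change-name={partition}:ceph data",
        f"--partition-guid={partition}:{osd_uuid}",
        f"--typecode={partition}:{PTYPE_UUID}",
        "--",
        device,
    ]


# Hypothetical values: the new partition will be number 3 on /dev/sda.
args = sgdisk_args("/dev/sda", 3, str(uuid.uuid4()))
print(shlex.join(args))
```

Keeping the arguments as a list avoids quoting problems with the “ceph data” name; once checked, such a list could be passed to subprocess.run(args, check=True) as root, followed by partprobe and ceph-disk prepare /dev/sda3.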

Recovering from a cinder RBD host failure

OpenStack Havana Cinder volumes associated with an RBD Ceph pool are bound to a host.

    cinder service-list --host bm0014.the.re@rbd-ovh
    +---------------+-----------------------+------+---------+-------+
    | Binary        | Host                  | Zone | Status  | State |
    +---------------+-----------------------+------+---------+-------+
    | cinder-volume | bm0014.the.re@rbd-ovh | ovh  | enabled | up    |
    +---------------+-----------------------+------+---------+-------+

A volume created on this host is permanently associated with it:

    $ mysql -e "select host from volumes where deleted = 0 and display_name = 'nerrant.fr'" cinder
    +-----------------------+
    | host                  |
    +-----------------------+
    | bm0014.the.re@rbd-ovh |
    +-----------------------+

If the host fails, any attempt to detach the volume will fail because the cinder-api cannot reach the host:

    /var/log/cinder/cinder-api.log
    2014-05-04 17:50:59.928 15128 TRACE cinder.api.middleware.fault Timeout: Timeout while waiting on RPC response - topic: "cinder-volume:bm0014.the.re@rbd-ovh", RPC method: "terminate_connection" info: ""

The failed cinder host is first disabled so the scheduler will no longer try to access it:

    cinder service-disable bm0014.the.re cinder-volume

The database is then updated to associate the volume with another host configured with access to the same Ceph pool:

    $ mysql -e "update volumes set host = 'bm0015.the.re@rbd-ovh' \
        where deleted = 0 and display_name = 'nerrant.fr'" cinder
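The deleted = 0 condition matters because deleted volumes may keep the same display name. The sketch below replays the update against an in-memory SQLite table, a hypothetical stand-in that only has the three columns used by the queries above (the real Cinder schema runs on MySQL and has many more), to show that only the live volume moves to the new host.

```python
import sqlite3

# Hypothetical minimal stand-in for Cinder's "volumes" table.
db = sqlite3.connect(":memory:")
db.execute("create table volumes (display_name text, host text, deleted integer)")
db.executemany(
    "insert into volumes values (?, ?, ?)",
    [("nerrant.fr", "bm0014.the.re@rbd-ovh", 0),   # the live volume
     ("nerrant.fr", "bm0014.the.re@rbd-ovh", 1)],  # an old, deleted volume
)


def move_volume(conn, display_name: str, new_host: str) -> int:
    """Rebind the live volume named `display_name` to `new_host`; return rows changed."""
    cur = conn.execute(
        "update volumes set host = ? where deleted = 0 and display_name = ?",
        (new_host, display_name),
    )
    conn.commit()
    return cur.rowcount


print(move_volume(db, "nerrant.fr", "bm0015.the.re@rbd-ovh"))  # 1
```

Checking that exactly one row changed is a cheap safeguard before relying on the new host.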

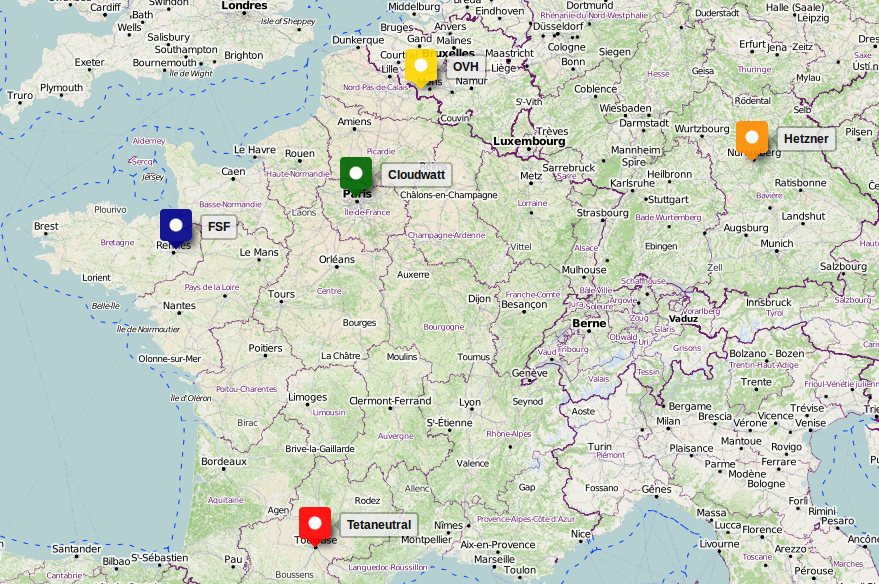

Non profit OpenStack & Ceph cluster distributed over five datacenters

A few non-profit organizations (April, FSF France, tetaneutral.net…) and volunteers constantly research how to get compute, storage and bandwidth that are:

- 100% Free Software

- Content neutral

- Low maintenance

- Reliable

- Cheap

The latest setup, in use since October 2013, is based on a Ceph and OpenStack cluster spread over five datacenters. It was designed for the following use cases:

- Free Software development and continuous integration

- Hosting low activity web sites, mail servers etc.

- Keeping backups

- Sharing movies and music


Sharing hard drives with Ceph

A group of users gives hard drives to the system administrator of the Ceph cluster. In exchange, each of them gets credentials to access a dedicated pool of a given size on the Ceph cluster.


Celebrate Firefly and Icehouse

If you’re in Atlanta on the evening of Sunday, May 11th, 2014, for the OpenStack summit or any other reason, join us to celebrate the OpenStack Icehouse release and the Ceph Firefly release. Both OpenStack and Ceph developers will be there and no presentations are planned: it’s 100% informal 🙂

Registration is on the Celebrate Firefly and Icehouse meetup page.